Kubernetes

Posit Connect can be configured to run on a Kubernetes cluster within AWS. This architecture is designed for the reliability and scale that come with a distributed, highly available deployment using modern container orchestration. It is best suited for organizations that already use Kubernetes for production workloads or have specific needs that our high availability (HA) architecture does not meet.

Architectural overview

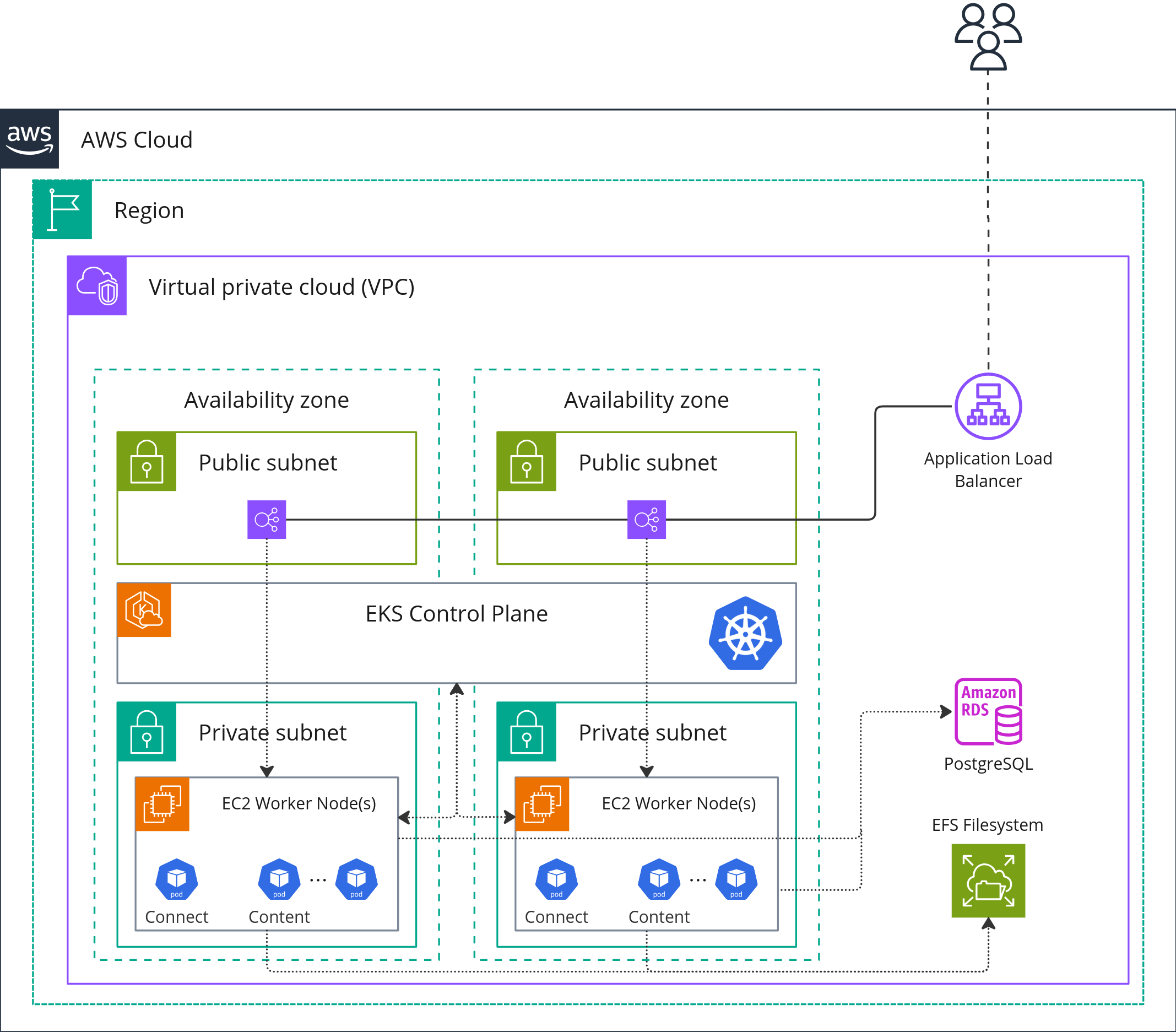

This deployment of Posit Connect utilizes Off-Host Execution and uses the following AWS resources:

- AWS Application Load Balancer (ALB) to route requests to the Connect service.

- AWS Elastic Kubernetes Service (EKS) to provision and manage the Kubernetes cluster.

- AWS Relational Database Service (RDS) for PostgreSQL, serving as the application database for Connect.

- AWS Elastic File System (EFS), a networked file system used to store file data, which is mounted across the Connect services.

For detailed instructions on installation and configuration, refer to the off-host execution section in the Posit Connect Admin Guide: Off-Host Execution Installation & Configuration.

Architecture diagram

Cluster

The Kubernetes cluster can easily be provisioned using AWS Elastic Kubernetes Service (EKS).

Nodes

We recommend at least two worker nodes of instance type t3.2xlarge, but both the number of nodes and the instance types can be scaled up for more demanding workloads. Your instance needs will depend on the size of your audience for Connect content as well as the compute and memory needs of your data scientists’ applications.

- Worker nodes should be provisioned across more than one availability zone and within private subnets.
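The node guidance above can be expressed as a small pre-flight check. The following is a minimal sketch, not part of Connect or of any AWS tooling: the `WorkerNode` class, the `check_node_plan` function, and the node names and availability zones are all hypothetical and exist only to illustrate the three rules (at least two nodes, more than one availability zone, private subnets).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkerNode:
    """Hypothetical description of one planned EKS worker node."""
    name: str
    instance_type: str
    availability_zone: str
    subnet_is_private: bool


def check_node_plan(nodes: list[WorkerNode]) -> list[str]:
    """Return a list of problems; an empty list means the plan follows the guidance."""
    problems = []
    if len(nodes) < 2:
        problems.append("fewer than two worker nodes")
    if len({n.availability_zone for n in nodes}) < 2:
        problems.append("worker nodes are not spread across more than one availability zone")
    public = [n.name for n in nodes if not n.subnet_is_private]
    if public:
        problems.append("nodes in public subnets: " + ", ".join(public))
    return problems


# Illustrative plan only; names and zones are made up.
plan = [
    WorkerNode("node-a", "t3.2xlarge", "us-east-1a", True),
    WorkerNode("node-b", "t3.2xlarge", "us-east-1b", True),
]
print(check_node_plan(plan))  # prints []
```

The check deliberately ignores the instance type, since the recommendation allows scaling it up for more demanding workloads.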

Database

This configuration utilizes an RDS instance with PostgreSQL running on a db.m5.large instance, provisioned with a minimum of 15 GB of storage and running the latest minor version of PostgreSQL 15 (see supported versions). Both the instance type and the storage can be scaled up for more demanding workloads.

- The RDS instance should be configured with an empty PostgreSQL database for the Connect metadata.

- The RDS instance should be a Multi-AZ deployment and should use a DB subnet group across all private subnets containing worker nodes for the EKS cluster.
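One way to confirm that an existing RDS instance matches these recommendations is to inspect its description. The sketch below assumes a dictionary shaped like one entry of the `DBInstances` list returned by the boto3 RDS `describe_db_instances` call; the `check_rds_instance` function, the example values, and the subnet IDs are hypothetical. No AWS call is made here, so the example runs offline.

```python
def check_rds_instance(db: dict, worker_subnet_ids: set[str]) -> list[str]:
    """Return a list of problems; an empty list means the instance follows the guidance."""
    problems = []
    if db.get("Engine") != "postgres":
        problems.append("engine is not PostgreSQL")
    if not str(db.get("EngineVersion", "")).startswith("15."):
        problems.append("engine version is not PostgreSQL 15")
    if db.get("AllocatedStorage", 0) < 15:
        problems.append("less than 15 GB of allocated storage")
    if not db.get("MultiAZ", False):
        problems.append("not a Multi-AZ deployment")
    # The DB subnet group should cover every private subnet that hosts worker nodes.
    group_subnets = {
        s["SubnetIdentifier"]
        for s in db.get("DBSubnetGroup", {}).get("Subnets", [])
    }
    missing = worker_subnet_ids - group_subnets
    if missing:
        problems.append(
            "DB subnet group is missing worker subnets: " + ", ".join(sorted(missing))
        )
    return problems


# Illustrative values only; this is not output from a real account.
example = {
    "DBInstanceClass": "db.m5.large",
    "Engine": "postgres",
    "EngineVersion": "15.4",
    "AllocatedStorage": 20,
    "MultiAZ": True,
    "DBSubnetGroup": {
        "Subnets": [
            {"SubnetIdentifier": "subnet-aaa"},
            {"SubnetIdentifier": "subnet-bbb"},
        ]
    },
}
print(check_rds_instance(example, {"subnet-aaa", "subnet-bbb"}))  # prints []
```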

Load balancer

This architecture utilizes an AWS Application Load Balancer (ALB) in order to provide public ingress and load balancing to the Connect service within EKS.

- The ALB must be configured with sticky sessions enabled.
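On an ALB, stickiness is a target group attribute. As a minimal sketch, the helper below checks a list of attributes shaped like the `Attributes` field returned by the boto3 `elbv2` `describe_target_group_attributes` call. The function name `stickiness_enabled` and the sample attribute values are assumptions for illustration; how the attribute is set in your deployment depends on how the ALB is provisioned.

```python
def stickiness_enabled(attributes: list[dict]) -> bool:
    """Return True if the target group attributes have sticky sessions turned on.

    `attributes` is a list of {"Key": ..., "Value": ...} dictionaries.
    """
    values = {a["Key"]: a["Value"] for a in attributes}
    return values.get("stickiness.enabled", "false").lower() == "true"


# Illustrative attributes only; the duration is an arbitrary example value.
sample = [
    {"Key": "stickiness.enabled", "Value": "true"},
    {"Key": "stickiness.type", "Value": "lb_cookie"},
    {"Key": "stickiness.lb_cookie.duration_seconds", "Value": "86400"},
]
print(stickiness_enabled(sample))  # prints True
```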

Resiliency and availability

This implementation of Connect is resilient to AZ failures, but not full region failures. Assuming worker nodes in separate availability zones, with Connect pods running on each worker node, a failure in either node will result in disruption to user sessions on the failed node, but will not result in overall service downtime.
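The availability claim above reduces to a simple condition: after removing any one availability zone, at least one Connect pod must remain. The following sketch illustrates that reasoning only. The function `survives_any_single_az_failure` and the pod counts are hypothetical, and the check says nothing about whether the remaining pods have enough capacity for the full load.

```python
def survives_any_single_az_failure(ready_pods_by_az: dict[str, int]) -> bool:
    """Return True if at least one ready pod remains after losing any single AZ."""
    total = sum(ready_pods_by_az.values())
    if total == 0:
        return False
    return all(total - count > 0 for count in ready_pods_by_az.values())


# Illustrative pod placements only.
print(survives_any_single_az_failure({"us-east-1a": 1, "us-east-1b": 1}))  # prints True
print(survives_any_single_az_failure({"us-east-1a": 2}))  # prints False
```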

We recommend aligning with your organizational standards for backup and disaster recovery procedures for the RDS instances and EFS file systems supporting this deployment. These two components, along with your Helm values.yaml file, are needed to restore Connect in the event of a cluster or regional failure.

Performance

The Connect team conducts smoke and performance testing on this architecture. During our testing, we published a Python-based FastAPI and an R-based Plumber application (using jumpstart examples included in the product) to Connect, simulating a load of 3000 concurrent users.

Based on our performance test results, the system’s response times meet acceptable criteria for the majority of users. The 99th percentile response times were below our tolerance threshold of 1.5 seconds.
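If you run your own load tests, a comparable check is to compute the 99th percentile of the recorded response times and compare it with the 1.5 second threshold. The sketch below uses the nearest-rank percentile method, which is an assumption on our part and not necessarily the method used in the testing described here. The function name and the sample latencies are made up for illustration and are not results from our tests.

```python
import math

THRESHOLD_SECONDS = 1.5  # threshold quoted in the text above


def percentile_nearest_rank(samples: list[float], pct: int) -> float:
    """Return the pct-th percentile of samples using the nearest-rank method."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in the range 1..100")
    ordered = sorted(samples)
    # Nearest rank: the smallest value with at least pct% of samples at or below it.
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


# Synthetic response times in seconds: 99 fast requests and one slow outlier.
latencies = [0.2] * 99 + [3.0]
p99 = percentile_nearest_rank(latencies, 99)
print(p99 < THRESHOLD_SECONDS)  # prints True
```

Note that a single slow outlier among 100 requests does not move the 99th percentile, which is why a percentile is a more stable acceptance criterion than the maximum response time.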

These tests demonstrate the capability of Connect to manage and serve applications to users. However, it’s important to note that the computational footprint of the content used in testing was minimal. For most Connect installations, the majority of computational power is dedicated to the Python and R content that publisher-users deploy, rather than Connect itself. If your team is deploying lightweight apps, APIs, and jobs to Connect, our testing results are likely to be applicable. If your team is deploying APIs or apps that involve heavy-duty data processing, machine learning, or other computationally intensive tasks, you may need larger or more compute-optimized EC2 and RDS instances. Upgrading other architecture components may not be necessary.